AI adoption is how deeply and broadly your organization actually uses AI — not just how many licenses you’ve bought.

What Is AI Governance?

AI governance is the system of visibility, policies, and guardrails that lets your organization say “yes” to AI experimentation — while managing risk appropriately.

TL;DR / Quick Definition

AI governance is the practice of creating the visibility, policies, and technical controls that allow AI experimentation to proceed across an organization while managing data, compliance, and security risks appropriately. Done correctly, it is explicitly not about locking things down. The goal of effective AI governance is to say to users: “Yes, and here’s how to do it safely,” channeling the natural, bottom-up experimentation that drives AI adoption into sanctioned paths where data is protected, compliance is maintained, and the organization retains full visibility into what is happening. For more on AI governance, see Larridin's guide, AI Governance: The Complete Enterprise Guide 2026.

Why AI Governance Matters in 2026

Four forces have converged to make AI governance an urgent enterprise priority: not a “nice to have” policy exercise, but a structural requirement for any organization using AI at scale.

The 200-tool explosion. When CIOs turn on AI monitoring, the typical enterprise discovers 200 to 300 AI tools in active use—three to five times what leadership expected. Employees aren’t being malicious. They’re experimenting, and that experimentation is healthy. But experimentation without visibility creates risks that compound silently: redundant spend, fragmented data flows, and tools with wildly varying security postures operating outside anyone’s line of sight.

Regulatory pressure is real and growing. HIPAA requires that protected health information only flows through vetted, compliant systems. An employee pasting patient notes into an unapproved AI tool creates a violation regardless of intent.

The EU’s General Data Protection Regulation (GDPR) requires documented data processing controls, which are impossible to demonstrate when tools operate outside IT’s visibility. SOC 2 and ISO 27001 audits require a complete inventory of systems processing sensitive data. When auditors ask, “What AI tools does your organization use, and how is data handled?”, most CIOs today cannot give a confident, complete answer.

Data leakage risk is subtle and high-stakes. To an employee, using ChatGPT through an enterprise account and using it through a personal license look almost the same (the interface is identical), but the data protection guarantees are fundamentally different. On a personal account, the data an employee enters may be used for model training, which is a form of data leakage, whereas an enterprise license usually excludes such use. So an employee reformatting a spreadsheet on a personal account may have just exposed every customer record in their territory to the next model training run, where it could in principle surface in responses to other users. In the age of AI, your data is your primary moat, and governance is what protects it.

Agentic AI is raising the stakes. As organizations move toward autonomous AI agents that operate independently for hours and take real actions, such as updating systems, sending communications, and routing work, governance is no longer just about acceptable use policies. It requires operational controls for AI systems that act without human involvement at many steps. The governance frameworks built for AI assistants, which have a “human in the loop” at each stage, are insufficient for AI workers that carry out tasks independently.

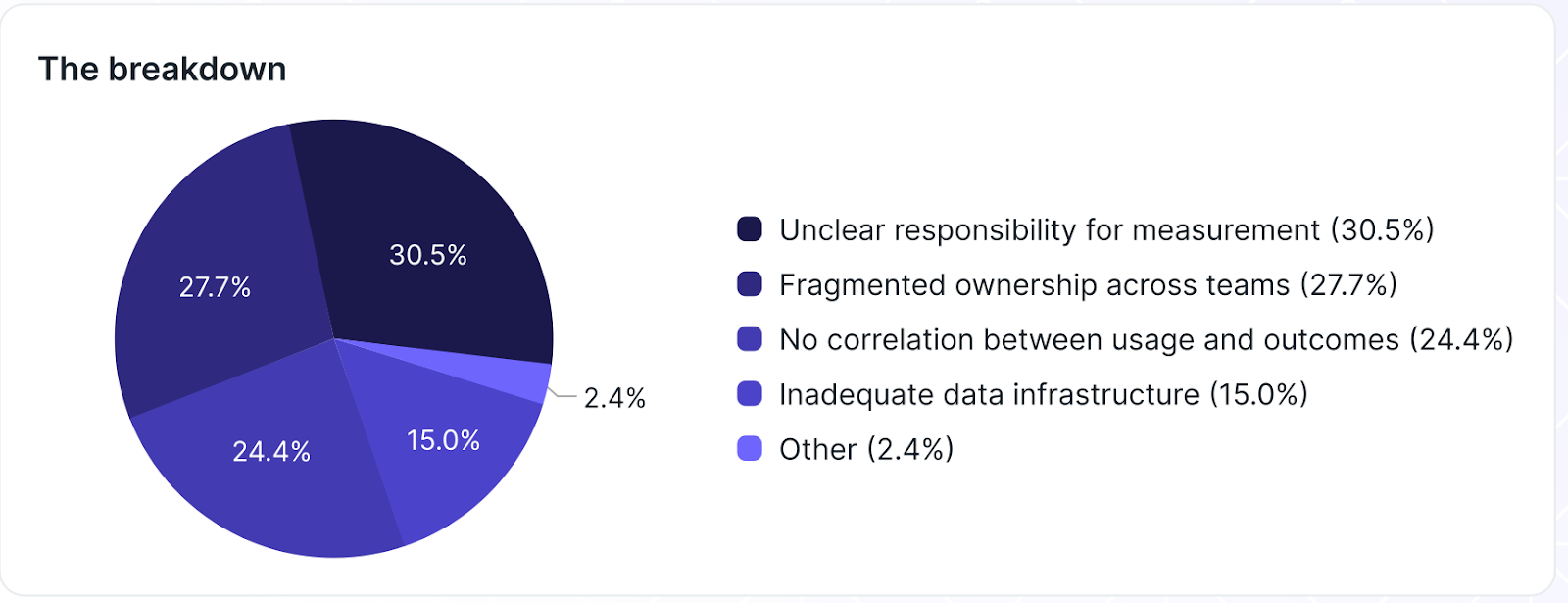

Governance contributes to AI adoption. What keeps organizations from successfully implementing AI measurement, a key supporting factor for AI governance? Larridin’s report, The State of Enterprise AI 2026, found that just four factors (shown in Figure 1) account for nearly all of the obstacles blocking AI measurement. Unclear responsibility and fragmented ownership led the list. The inability to connect usage to outcomes and inadequate data infrastructure (a broader problem for using AI effectively) rounded it out.

Figure 1. Factors blocking AI measurement.

(Source: The State of Enterprise AI 2026)

Core Framework & Key Concepts

The Governance Spectrum

Effective AI governance is not binary. It is not simply “approved” usage vs. “blocked” access. Instead, it operates on a five-level spectrum that can be tailored by tool, risk level, industry, and use case:

- Educate. Surface a message explaining the organization’s AI policies and approved alternatives. Don’t block the action; inform the user. This approach is appropriate for low-risk tools where awareness is the primary concern.

- Warn. Display an active warning requiring the user to acknowledge risk before proceeding. The user can continue, but they’ve been made explicitly aware of risks.

- Monitor. Allow usage, but log it for review. This creates the audit trail needed for compliance without blocking productive work.

- Restrict. Prevent specific actions, such as uploading files containing sensitive data to unapproved tools, while allowing other usage to continue. This is targeted restriction, not blanket blocking.

- Block. Prevent access entirely. This most severe approach is reserved for tools that present unacceptable risk based on data sovereignty, compliance, or security assessments. Use it sparingly.

The right position on this spectrum varies. A financial institution might operate near “restrict/block” for tools handling customer financial data, while leaning toward “educate/warn” for marketing tools. A technology company might favor “educate/monitor” for most tools, to preserve experimentation velocity. The key insight: governance is not about saying “no.” It is about saying “yes, and here’s how to do it safely.”
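
As a rough illustration of how the spectrum can be expressed as policy, the sketch below maps a tool's risk tier and the data category involved to one of the five responses. The tier names, data categories, table entries, and the default of “monitor” are all hypothetical, chosen only to mirror the financial-institution example above; a real policy would come from your own risk assessment.

```python
from enum import IntEnum


class Response(IntEnum):
    """The five governance responses, in the order listed above."""
    EDUCATE = 1
    WARN = 2
    MONITOR = 3
    RESTRICT = 4
    BLOCK = 5


# Hypothetical policy table: (tool risk tier, data category) -> response.
# These entries are illustrative assumptions, not recommended settings.
POLICY = {
    ("low", "marketing"): Response.EDUCATE,
    ("medium", "marketing"): Response.WARN,
    ("medium", "customer_financial"): Response.RESTRICT,
    ("high", "customer_financial"): Response.BLOCK,
}

# Assumed fallback when no explicit rule exists: allow the usage but log it.
DEFAULT_RESPONSE = Response.MONITOR


def response_for(risk_tier: str, data_category: str) -> Response:
    """Look up the governance response for a tool tier and data category."""
    return POLICY.get((risk_tier, data_category), DEFAULT_RESPONSE)
```

Keeping the mapping in a table rather than in branching logic makes it easier to review with compliance and legal stakeholders, and to adjust per tool, industry, or use case.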

Policy-Based Data Protection at the Browser Level

Beyond knowing which tools employees are using, governance requires preventing sensitive data from leaving the organization in the first place. A written policy that says “don’t paste customer data into AI tools” is only as effective as every employee’s ability to remember and follow it in the moment, especially when pasting in customer data might be a quick way to solve an immediate problem.

Browser-level data protection enforces policies in real time, evaluating what is being shared and intervening before protected data is transmitted. This means defining sensitive data categories (customer PII, financial records, source code, medical records), applying different policies per tool tier and user group, and enforcing governance at the point of action rather than relying on training sessions that employees are likely to forget.
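
To make the idea of enforcement at the point of action concrete, here is a minimal sketch that inspects text before it is sent to an AI tool and returns a decision. It is not how any particular product works: the two regular expressions, the category names, and the `evaluate_paste` function are assumptions for illustration, and real detection of PII, source code, or medical records needs far more robust techniques than simple pattern matching.

```python
import re

# Illustrative patterns only (assumed for this sketch).
SENSITIVE_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def detect_categories(text: str) -> set[str]:
    """Return the names of sensitive-data categories found in the text."""
    return {
        name for name, pattern in SENSITIVE_PATTERNS.items()
        if pattern.search(text)
    }


def evaluate_paste(text: str, tool_approved: bool) -> str:
    """Decide what to do with text about to be shared with an AI tool.

    Hypothetical rules: text with no detected sensitive data is allowed;
    sensitive data going to an approved tool is logged ("monitor");
    sensitive data going to an unapproved tool is stopped ("restrict").
    """
    if not detect_categories(text):
        return "allow"
    return "monitor" if tool_approved else "restrict"
```

A fuller version would also take the user group into account, since the same content may warrant different handling for different teams.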

The Six-Step Governance Program

Building a responsible AI governance program follows a structured progression:

- Establish Visibility. Discover every AI tool in use, sanctioned and unsanctioned, at the browser and desktop level where AI is actually used.

- Classify and Assess Risk. Evaluate each tool’s data handling policies, security posture, data sovereignty, and enterprise availability.

- Define Policies. Map governance responses across the spectrum (educate through block) by tool risk tier, data type, user group, and regulatory overlay.

- Deploy Data Protection. Implement real-time, policy-based enforcement at the browser level.

- Educate and Enable. Pair every control with clear communication about why the policy exists and what the approved alternatives are. Make the right thing the easy thing.

- Monitor, Benchmark, and Iterate. Track unauthorized usage rates against the 3-4% benchmark, monitor policy violation trends, and iterate continuously.
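
The final step involves a simple calculation: the share of AI usage that occurs in unauthorized tools, compared against the 3-4% benchmark. The sketch below shows one way to compute it, assuming each usage event is a dictionary with a boolean `sanctioned` field. That event shape and the function names are hypothetical.

```python
# Benchmark band for unauthorized usage, taken from the 3-4% figure above.
BENCHMARK_LOW = 0.03
BENCHMARK_HIGH = 0.04


def unauthorized_rate(usage_events: list[dict]) -> float:
    """Fraction of usage events that occurred in unsanctioned tools."""
    if not usage_events:
        raise ValueError("no usage events to measure")
    unauthorized = sum(1 for event in usage_events if not event["sanctioned"])
    return unauthorized / len(usage_events)


def compare_to_benchmark(rate: float) -> str:
    """Classify a rate as below, within, or above the benchmark band."""
    if rate < BENCHMARK_LOW:
        return "below"
    if rate > BENCHMARK_HIGH:
        return "above"
    return "within"
```

A result of "above" is worth reading as a signal that sanctioned tools may not be meeting employee needs, rather than only as a compliance problem.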

Governance as a Maturity Foundation

In the Larridin AI Maturity Model, governance is not a late-stage concern — it is the very first stage. Stage 1: Visibility and Controls requires full AI tool discovery, a basic governance framework, foundational measurement infrastructure, and a risk baseline. Most enterprises are at Stage 1 or haven’t fully completed it. The most common mistake is assuming that deploying Copilot licenses or approving a ChatGPT Enterprise subscription means you have visibility. Deployment is not visibility.

At the other end of the maturity spectrum, Stage 5: Agentic Deployment demands an entirely new governance layer. Autonomous AI agents that run independently for hours, taking real actions in production workflows, require audit trails for every action, quality monitoring, clear escalation paths, and operational controls that go far beyond acceptable use policies. Governance must evolve as AI capabilities evolve — from governing tools to governing autonomous workers.
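
As a minimal sketch of what an audit trail with an escalation path might look like for agents, the example below records every agent action and flags certain action types for human review. The class names, field names, and the set of escalated actions are all hypothetical; a production system would also need durable, tamper-evident storage and quality monitoring.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentActionRecord:
    """One immutable audit entry for an action taken by an AI agent."""
    agent_id: str
    action: str
    target: str
    timestamp: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


# Hypothetical set of action types that should be escalated to a human.
ESCALATE_ACTIONS = {"send_external_email", "delete_record"}


class AuditTrail:
    """In-memory, append-only log of agent actions (illustration only)."""

    def __init__(self) -> None:
        self._records: list[AgentActionRecord] = []

    def record(self, agent_id: str, action: str, target: str) -> bool:
        """Log the action and return True if it needs human escalation."""
        self._records.append(AgentActionRecord(agent_id, action, target))
        return action in ESCALATE_ACTIONS

    def records(self) -> tuple[AgentActionRecord, ...]:
        """Return a read-only snapshot of everything logged so far."""
        return tuple(self._records)
```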

The Role of "Shadow AI"

The term "shadow IT" describes the use of hardware devices, software, SaaS applications, and more within a company without the approval of the IT department. When the iPhone first appeared, it was wildly popular but rarely approved for corporate use; many corporate employees still ended up using their iPhones for work purposes.

"Shadow AI" does not (yet) include hardware, but it's similar to "shadow IT": the unsanctioned use of LLMs and other AI-powered software within a company. And, as with "shadow IT," the answer is rarely to simply shut it down.

In fact, unsanctioned, "shadow" AI can play an important role in companies. It's a way for employees to experiment with and adopt suitable AI tools before their companies are ready.

As you'll see in our complete Guide, unsanctioned AI can play an important role in faster, more effective adoption of AI in your organization. Once you have visibility into all the AI being used, "shadow" AI is no longer something to be feared; instead, it simply becomes just another aspect of AI that needs to be governed.

Common Misconceptions

“AI governance means blocking tools.” This is the most damaging misconception. Organizations that respond to AI tool proliferation by blocking everything kill the experimentation culture that drives adoption and proficiency. Governance exists to channel experimentation into safe paths, not to stop it. Every “no” should come with a “yes, and here’s how.”

“The goal is zero unauthorized AI usage.” Zero unauthorized usage means you’ve locked everything down so tightly that experimentation is dead. A healthy target is approximately 3-4% of total AI usage occurring in unauthorized tools: enough usage to allow discovery and innovation, but kept small enough to allow monitoring, with intervention where needed. If the percentage rises, it signals that sanctioned tools aren’t meeting employee needs, which is a strategic insight, not a governance failure.

“We wrote a policy, so we’re governed.” Governance is not a document. It is continuous infrastructure — monitoring, enforcement, measurement, and iteration. A policy written once and distributed via email is governance theater. Effective governance is enforced by technology at the point of action, tracked through ongoing metrics, and updated as the AI landscape evolves. The tool landscape shifts weekly; governance must keep pace.

“Governance is an IT problem.” Governance spans IT, security, compliance, legal, procurement, and business leadership. It requires cross-functional ownership, because the risks — data leakage, regulatory exposure, wasted spend, missing audit trails — span every function. The best governance programs are jointly owned and treat governance metrics as first-class KPIs alongside adoption and proficiency.

How It Connects

AI governance is tightly linked to several related concepts across the enterprise AI landscape:

- Shadow AI is the problem that governance solves. Shadow AI is what happens when employees experiment with AI tools outside IT’s visibility. Governance provides the framework (visibility, classification, and spectrum-based policies) that transforms ungoverned experimentation into managed exploration.

- AI Adoption and governance are complementary forces. Governance that is too restrictive kills adoption. Adoption without governance creates unmanaged risk. The organizations that win are the ones that get the balance right, enabling broad experimentation within a framework of responsible controls.

- AI Execution Intelligence is the broader discipline that makes governance data-driven. Governance metrics, such as the rate of unauthorized usage, policy violation trends, tool inventory completeness, and governance coverage, feed into the execution intelligence layer that connects AI activity to risk management and business outcomes.

For a comprehensive treatment of governance strategy and implementation, see the full Shadow AI Guide and the AI Maturity Model (governance as Stage 1 and Stage 5). For the complete picture on AI governance, see Larridin's guide, AI Governance: The Complete Enterprise Guide 2026.

Larridin gives enterprises complete visibility into their AI tool landscape — sanctioned and unsanctioned — with browser-level monitoring, real-time data protection, and governance analytics segmented by team, department, and risk level. If your organization cannot confidently answer “what AI tools are in use and how is data protected?” — Larridin closes that gap.