Adoption tells you who’s using AI. Proficiency tells you who’s getting value from it—and the gap between the two is where enterprises are leaving the most on the table.

The Proficiency Gap Is the Biggest Untold Story in Enterprise AI

Enterprise AI has an adoption problem—but not the one most people think. The real problem isn’t that people aren’t using AI. It’s that most of them aren’t using it well.

The numbers tell a stark story.

OpenAI’s 2025 State of Enterprise AI report revealed a 6x productivity gap between AI power users and typical employees. Not 6%: six times. The same tools, the same organization, the same access, and a small group of power users is extracting six times more value than everyone else.

Meta put concrete numbers on this divide. During its Q4 2025 earnings call, the company reported a 30% increase in output per engineer overall from AI tools. But the power users of Meta’s internal AI coding tools? Their output rose 80% year-over-year. As Mark Zuckerberg noted, “projects that used to require big teams can now be accomplished by a single, very talented person.” The difference between average and exceptional isn’t marginal—it’s transformational.

EY’s 2025 Work Reimagined Survey, spanning 15,000 employees across 29 countries, found that 88% of employees use AI in their daily work. That sounds like an adoption success story. Except that usage is “mostly limited to basic applications, such as search and summarizing documents.” Only 5% are using AI in advanced ways to transform the way they work. The vast majority of the workforce has adopted AI in the most superficial sense possible.

As Business Insider reported, “AI adoption in a large enterprise can be complicated. Competence with AI tools can be very uneven across the board, and security risks can mount without clear guidelines on AI usage.” Adoption is complicated. Competence is uneven. And the gap between the two is where enterprises are losing value.

This is the proficiency gap—and it’s the biggest untold story in enterprise AI.

Why Adoption Without Proficiency Is a Losing Strategy

Sam Altman, speaking at Sequoia Capital’s AI Ascent event, described three distinct buckets of how people use AI:

Bucket 1: The Google Replacement. “Older people use ChatGPT as a Google replacement,” Altman said. These users treat AI as a slightly better search engine. They ask simple questions and get simple answers. The productivity gain is real but modest—maybe 5-10%. This is where the vast majority of enterprise AI users sit today.

Bucket 2: The Augmented Worker. “People in their 20s and 30s use it like a life advisor,” Altman described. In the enterprise context, these are the users who have integrated AI into their actual work—using it to draft, analyze, brainstorm, iterate, and refine. This is Steve Jobs’ “bicycle for the mind” come to life. They’re meaningfully more productive because AI is amplifying their existing capabilities.

Bucket 3: The AI-Native. “People in college use it as an operating system,” Altman observed. They have “complex ways to set it up, to connect it to a bunch of files,” with “fairly complex prompts memorized in their head.” In the enterprise, these are the people who approach every problem AI-first. They’re not just doing their old job faster—they’re doing things they never would have attempted before. They’re reimagining what’s possible.

The productivity curve across these three buckets isn’t linear. It’s exponential. The gap between a Bucket 1 user and a Bucket 3 user is far steeper than the normal distribution of productivity you see across any workforce. People at the top of the AI proficiency curve are not incrementally better—they are operating in a fundamentally different mode.

Meta’s own data proves this at scale. The 30% average improvement represents the blended impact across all three buckets. But the 80% improvement among power users—nearly three times the average—shows what happens when employees reach Bucket 2 and Bucket 3. And Zuckerberg’s observation that single individuals are now accomplishing what used to require entire teams? That’s Bucket 3 in action.

For enterprises, the implication is clear: measuring adoption alone is measuring the wrong thing. Having 90% of your workforce in Bucket 1 is fundamentally different from having 30% in Bucket 2 and 10% in Bucket 3—even though both scenarios might report the same “adoption rate.” The entire ROI of your AI investment depends on where your people fall on the proficiency spectrum, not whether they’ve logged in.

This is why AI proficiency—not just AI adoption—must become a core enterprise metric.
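The arithmetic behind the two scenarios above can be sketched in a few lines. This is a minimal illustration, not a measurement model: the 50% Bucket 1 share in the second scenario is an assumption chosen so that both scenarios report 90% adoption, and only the Bucket 1 uplift (the midpoint of the 5-10% mentioned earlier) comes from the text. The Bucket 2 and Bucket 3 uplift values are placeholders.

```python
# Share of the total workforce (in percent) per usage bucket.
# Anyone not listed has not adopted AI at all.
# ASSUMPTION: scenario_b's 50% in bucket_1 is chosen so both scenarios show 90% adoption.
scenario_a = {"bucket_1": 90, "bucket_2": 0, "bucket_3": 0}
scenario_b = {"bucket_1": 50, "bucket_2": 30, "bucket_3": 10}

# Illustrative productivity uplift per bucket.
# bucket_1 is the midpoint of the 5-10% range in the text;
# bucket_2 and bucket_3 are placeholder values, not reported figures.
UPLIFT = {"bucket_1": 0.075, "bucket_2": 0.40, "bucket_3": 0.90}


def adoption_rate(shares: dict[str, int]) -> int:
    """Percent of the workforce using AI in any bucket."""
    return sum(shares.values())


def blended_uplift(shares: dict[str, int]) -> float:
    """Workforce-wide average uplift, weighting each bucket by its share."""
    return sum(pct * UPLIFT[bucket] for bucket, pct in shares.items()) / 100


for name, shares in (("A", scenario_a), ("B", scenario_b)):
    print(f"Scenario {name}: adoption {adoption_rate(shares)}%, "
          f"blended uplift {blended_uplift(shares):.1%}")
```

Under these assumed numbers, both scenarios report the same adoption rate while the blended uplift differs by more than a factor of three, which is the gap an adoption dashboard alone would hide.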

What AI Proficiency Actually Means

AI proficiency is not a single skill. It’s a multi-dimensional capability that spans how people think about AI, interact with it, select tools, and ultimately, whether they can get AI to act autonomously on their behalf.

At its core, proficiency measures whether a user truly understands what AI can do—and can push its boundaries to deliver real results. The difference between a naive user and a proficient one isn’t typing speed or technical knowledge. It’s model intuition.

A naive user might type: “Build me an app like Airbnb.” That’s expecting magic—treating AI as a wish-granting machine, with no understanding of what the model needs to produce a useful result.

A proficient user approaches the same goal entirely differently. They brainstorm with the model first. They plan what can realistically be built. They provide detailed context—the target audience, the constraints, the technical stack, the priorities. They engage in back-and-forth dialogue, refining the output iteratively. They might use multiple models—one for architecture decisions, another for code generation, a third for copy and design. The result isn’t just better output—it’s a fundamentally different relationship with the technology.

The Nine Dimensions of AI Proficiency

Larridin measures AI proficiency across nine dimensions that together capture the full spectrum of how effectively someone uses AI:

1. Model Intuition

Does the user have an intuitive understanding of what AI models can and cannot do? Can they calibrate their expectations appropriately—neither underestimating the model’s capabilities nor expecting magic? Do they understand when to push for more and when the model has reached its limits?

Model intuition is the foundation of proficiency. Without it, every other skill is compromised. A user with strong model intuition knows that the quality of the output is directly proportional to the quality of the input, and they act accordingly.

2. Interaction Sophistication

How does the user communicate with AI? Proficiency here ranges from single-shot queries (“write me an email”) to rich, multi-turn conversations where the user provides context, constraints, examples, and iterative feedback.

The most proficient users treat AI as a thinking partner. They engage in structured dialogue—brainstorming, then planning, then executing, then refining. They provide the model with the context it needs: background information, audience details, tone preferences, examples of what “good” looks like, and explicit constraints on what to avoid.

This dimension also includes the ability to recover from poor outputs—recognizing when the model has gone off track and knowing how to steer it back, rather than abandoning the conversation or accepting subpar results.

3. Multi-Model Fluency

Does the user leverage multiple AI models, and do they understand the strengths and weaknesses of each? The AI landscape is not monolithic—different models excel at different tasks.

A proficient user might use Claude for analytical reasoning and long-form writing, GPT for creative brainstorming, Gemini for research synthesis, and a specialized model for domain-specific tasks. They cross-reference outputs across models for important decisions. They understand which model to reach for based on the task at hand—and they switch fluidly between them.

Multi-model fluency is a strong signal of advanced proficiency because it requires both breadth of experience and the judgment to match tools to tasks.

4. Tooling Sophistication

Beyond conversational AI, does the user leverage advanced capabilities? This includes:

- MCP (Model Context Protocol)—connecting AI to external data sources and tools

- Connectors and integrations—linking AI to the systems where their work lives

- Custom instructions and memory—personalizing AI to their specific context and preferences

- API access and automation—using AI programmatically, not just through chat interfaces

- Advanced features—code execution, file analysis, web browsing, image generation, and other capabilities beyond basic chat

A user who only interacts through a chat window has a ceiling on their proficiency. The most proficient users leverage the full tooling ecosystem to extend what AI can do for them.

5. Right Tool for the Right Job

Beyond LLMs, does the user select the appropriate specialized tool for the task at hand? This is about the full AI ecosystem, not just chatbots.

A proficient user reaches for Cursor or Windsurf for code—not ChatGPT. They use ElevenLabs for voice generation, Midjourney or Flux for images, Runway for video, and Perplexity for research. They understand that a purpose-built AI tool usually outperforms a general-purpose LLM for specialized tasks.

This dimension also includes knowing when not to use AI—recognizing tasks where human judgment, creativity, or domain expertise is more effective than AI assistance.

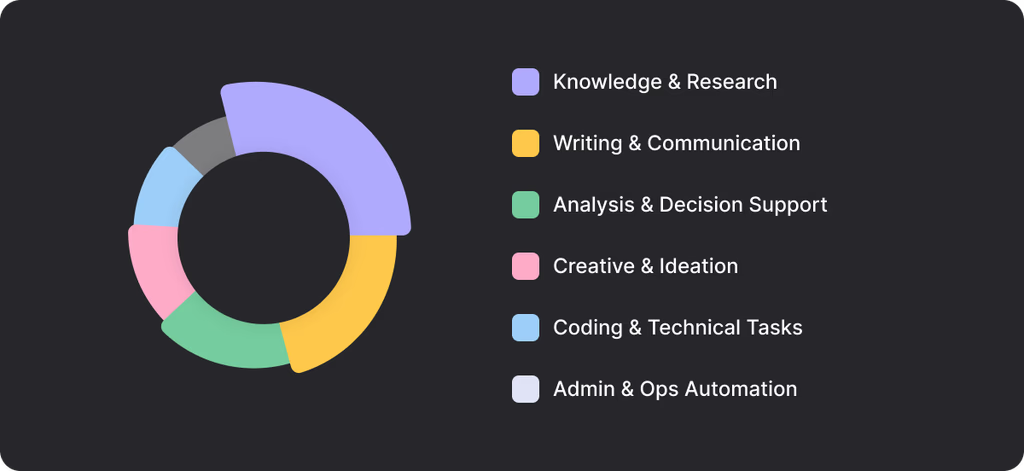

6. Use Case Diversity

Is the user applying AI across a broad range of work activities, or only for one narrow task? Proficiency is reflected in breadth of application:

- Knowledge and research—synthesizing information, literature review, competitive analysis

- Writing and communication—emails, reports, presentations, documentation

- Analysis and decision-making—data interpretation, scenario modeling, strategic thinking

- Creative work—brainstorming, ideation, content creation, design

- Technical work—coding, debugging, architecture, system design

- Operations—workflow automation, process optimization, project planning

A user who applies AI only for email drafting is at a very different proficiency level than one who uses it across research, analysis, writing, coding, and creative tasks within a single week.

7. New Tool Adoption Speed

The AI landscape evolves continuously. New tools, new features, and new capabilities emerge weekly. Proficiency includes the ability—and willingness—to adopt new tools quickly.

Has the user adopted browser-based AI tools? Desktop agents like Claude’s computer use or Cowork? New interaction paradigms such as voice mode or canvas? When a new capability launches, how quickly do they experiment with it and integrate it into their workflow?

Fast adopters are typically the same people who become power users. Slow adopters fall behind not just in tooling but in the mental models needed to use AI effectively.

8. Agentic Capability

This is the frontier of AI proficiency—and it’s what separates the truly AI-native from everyone else.

Can the user move beyond conversation to delegation? Can they set up AI agents to execute complex, multi-step tasks autonomously? The proficiency spectrum doesn’t end at “great at prompting.” The highest levels involve orchestrating AI to do work independently:

- Setting up an AI coding agent to refactor an entire codebase

- Delegating a research workflow that gathers, synthesizes, and presents findings

- Configuring agentic workflows that execute multi-step business processes

- Using AI agents that operate across multiple tools and systems to complete complex tasks

The distinction is between talking to AI and getting AI to act. A user who can set up Claude Code to autonomously handle a complex development task, or orchestrate multiple agents across research, drafting, and scheduling, has reached a proficiency level that most organizations haven’t even begun to measure.

9. Habit Consistency

Proficiency isn’t demonstrated in a single impressive session—it’s sustained behavior over time. Is the user consistently applying all of the above dimensions week after week? Or do they spike after a training session and then revert to basic usage?

True proficiency is habitual. It’s the user who defaults to AI-assisted workflows automatically, not the one who remembers to use AI occasionally. Habit consistency separates genuine capability from occasional experimentation.

The AI Proficiency Spectrum

These nine dimensions map to a proficiency spectrum that describes where any individual—or any team, department, or organization—sits in their AI journey:

Level 1: The Search Replacer

Uses AI as a slightly better Google. Asks simple, single-shot questions. Accepts the first output. Rarely follows up or iterates. Uses one tool (usually ChatGPT or Copilot). AI saves them a few minutes per day on information retrieval.

Productivity impact: 5-10% on limited tasks

Level 2: The Task Automator

Uses AI for specific, repetitive tasks—drafting emails, summarizing documents, generating basic code. Better at providing context than Level 1 but still treats AI as a utility. Uses one, maybe two tools. Doesn’t iterate much.

Productivity impact: 15-25% on targeted tasks

Level 3: The Augmented Worker

AI is a genuine work partner. Multi-turn conversations with rich context. Iterates on outputs. Starting to use multiple models. Applies AI across several use cases—writing, analysis, research. Beginning to explore advanced features and integrations.

Productivity impact: 30-50% across a range of activities

Level 4: The Power User

Fluent across multiple models and tools. Instinctively uses the right tool for the right job. Leverages advanced capabilities (MCP, connectors, custom instructions, APIs). Diverse use cases across their entire workflow. Adopts new tools quickly. AI is embedded in how they think and work—not just how they execute.

Productivity impact: 50-100%+—doing significantly more than their pre-AI output

Level 5: The AI-Native Orchestrator

Operates at the agentic frontier. Not just using AI but delegating to it. Sets up autonomous workflows. Orchestrates multiple agents across complex tasks. Reimagines what’s possible—taking on projects and responsibilities they never would have attempted before. Approaches every problem AI-first and designs their entire work process around AI capabilities.

This is the person Zuckerberg described: the “single, very talented person” accomplishing what used to require a big team.

Productivity impact: Transformational—redefining the scope of what one person can achieve

Most enterprise employees today are at Level 1 or 2. The organizations that can move a meaningful percentage of their workforce to Level 3 and above will capture disproportionate value from their AI investments. The organizations that develop Level 5 talent will have capabilities their competitors simply cannot match.

Why Proficiency Is a Moving Target

Here’s the uncomfortable truth about AI proficiency: it’s not a fixed standard. What qualified as “advanced” six months ago is “baseline” today.

The AI landscape evolves faster than any technology in history. Models get smarter every quarter. New capabilities—agentic workflows, computer use, multi-modal reasoning, real-time collaboration—emerge constantly. New tools launch weekly. The frontier of what’s possible moves relentlessly forward.

This means that a static proficiency assessment is nearly useless. An employee who was a “power user” in mid-2025—great at prompt engineering with GPT-4—might be a “task automator” by early 2026 standards if they haven’t adopted agentic workflows, multi-model strategies, and new tool categories.

At Larridin, we address this by recalibrating our proficiency definitions every 30 days. This ensures that proficiency scores reflect current expectations, not historical ones. An organization’s proficiency data is always measured against the current state of what’s possible—not what was possible when they first deployed their AI tools.

This temporal recalibration has several important implications:

Proficiency requires continuous learning. Unlike most skills, where you can reach a level and maintain it, AI proficiency demands ongoing investment. Employees must keep experimenting, keep adopting new tools, and keep pushing their usage forward, or their proficiency will decline while those who are always learning move steadily ahead.

Training programs must be evergreen. A one-time AI training program is almost worthless. By the time employees absorb the material, the frontier has moved. Effective AI enablement requires continuous, updated training that evolves with the technology.

Benchmarks must be dynamic. Comparing your organization’s proficiency to a fixed standard is misleading. Benchmarks should be recalibrated against the current best practices and tool capabilities, not against an arbitrary baseline set months ago.
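One way to picture recalibration is to score the same behavior against cut-offs that rise over time. The sketch below is an illustration only and does not describe Larridin's actual scoring: the raw score scale, the period keys, and every threshold value are hypothetical.

```python
from bisect import bisect_right

# HYPOTHETICAL cut-offs: minimum raw score (0-100) required for Levels 2, 3, 4, and 5.
# The values are invented for illustration; the bar rises as the frontier moves.
THRESHOLDS_BY_PERIOD = {
    "2025-07": [20, 40, 60, 80],
    "2026-01": [35, 65, 85, 95],
}


def level_for(raw_score: float, period: str) -> int:
    """Return the proficiency level (1-5) for a raw score under that period's cut-offs."""
    cutoffs = THRESHOLDS_BY_PERIOD[period]
    # bisect_right counts how many cut-offs the score meets or exceeds.
    return 1 + bisect_right(cutoffs, raw_score)


unchanged_score = 62  # the employee's behavior has not changed between periods
print(level_for(unchanged_score, "2025-07"))
print(level_for(unchanged_score, "2026-01"))
```

With these invented thresholds, an unchanged score of 62 maps to Level 4 in the earlier period and Level 2 in the later one, mirroring the power user who becomes a task automator simply by standing still.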

Measuring Proficiency Across the Enterprise

Measuring AI proficiency at scale requires a fundamentally different approach than measuring adoption. Adoption is a binary signal—are they using it or not? Proficiency is a continuous, multi-dimensional assessment that requires observing how people use AI, not just whether they do.

The Measurement Challenges

You can’t survey your way to proficiency data. Self-reported proficiency is notoriously unreliable. The Dunning-Kruger effect is alive and well in AI—beginners overestimate their abilities, while experts underestimate theirs. Any proficiency measurement that relies on self-assessment will produce distorted results.

Vendor dashboards don’t capture proficiency. Microsoft’s Copilot dashboard can tell you that someone used Copilot 47 times this month. It cannot tell you whether those 47 interactions were sophisticated, multi-turn conversations that produced transformational output—or 47 instances of dismissing a basic autocomplete suggestion.

It requires behavioral observation. True proficiency measurement requires understanding the quality and sophistication of AI interactions—not just their frequency. This means analyzing patterns of usage across tools, sessions, and time to build a composite picture of how each user engages with AI.

What to Measure

An effective AI proficiency measurement program captures:

- Interaction patterns—Are users engaging in multi-turn, iterative conversations? Or single-shot queries?

- Tool portfolio—How many distinct AI tools and models does each user engage with? Are they matching tools to tasks?

- Feature utilization—Are users leveraging advanced capabilities, or only basic chat?

- Use case breadth—Across how many distinct work activities is AI being applied?

- Adoption velocity—When new tools or features become available, how quickly are users engaging with them?

- Consistency—Is sophisticated usage sustained over time, or sporadic?

- Agentic engagement—Are users setting up and delegating to AI agents for autonomous work?
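The signals listed above could be combined into a single sophistication score along the following lines. This is a rough sketch under stated assumptions: the field names, the ceilings, and the equal weighting are all hypothetical, and a real program would need to calibrate them against outcomes. The raw interaction count is deliberately left out of the score to show why frequency alone says little.

```python
from dataclasses import dataclass


@dataclass
class UsageSummary:
    """One user's monthly behavioral signals. All field names are hypothetical."""
    interactions: int              # raw count, the number a vendor dashboard reports
    avg_turns: float               # interaction patterns
    distinct_tools: int            # tool portfolio
    advanced_feature_share: float  # feature utilization, 0..1
    use_cases: int                 # use case breadth
    days_to_first_use: int         # adoption velocity for the latest feature
    active_weeks: int              # consistency, out of 4
    agent_runs: int                # agentic engagement


def capped(value: float, ceiling: float) -> float:
    """Scale a value to 0..1, saturating at the ceiling."""
    return min(value, ceiling) / ceiling


def sophistication(u: UsageSummary) -> float:
    """Equal-weight average of seven capped signals, on a 0-100 scale."""
    signals = [
        capped(u.avg_turns, 8),
        capped(u.distinct_tools, 5),
        capped(u.advanced_feature_share, 1),
        capped(u.use_cases, 6),
        1 - capped(u.days_to_first_use, 30),  # faster adoption scores higher
        capped(u.active_weeks, 4),
        capped(u.agent_runs, 10),
    ]
    return round(100 * sum(signals) / len(signals), 1)


autocomplete_user = UsageSummary(
    interactions=47, avg_turns=1.0, distinct_tools=1, advanced_feature_share=0.0,
    use_cases=1, days_to_first_use=30, active_weeks=4, agent_runs=0,
)
thinking_partner = UsageSummary(
    interactions=47, avg_turns=6.0, distinct_tools=4, advanced_feature_share=0.5,
    use_cases=5, days_to_first_use=3, active_weeks=4, agent_runs=3,
)
print(sophistication(autocomplete_user), sophistication(thinking_partner))
```

Both sample users log the same 47 interactions, yet their scores land far apart, which is the distinction a usage-count dashboard cannot make.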

Segmenting Proficiency

Just as with adoption, proficiency data becomes actionable when segmented across organizational dimensions:

- By department—Which teams have the highest concentration of Level 4-5 users? Which are stuck at Level 1-2?

- By role—How does proficiency vary across engineers, marketers, analysts, salespeople, and managers?

- By seniority—Are individual contributors more proficient than managers? Are executives leading by example?

- By tenure—Are newer employees (potentially digital natives) more proficient? Or are experienced employees who understand the business finding more valuable use cases?

- By geography—Are certain offices or regions ahead or behind?

This segmentation reveals patterns that drive targeted intervention. If your marketing team is at Level 1-2 while engineering is at Level 3-4, you know exactly where to focus your next enablement push. If individual contributors are more proficient than their managers, you’ve identified a cultural barrier—managers may be the bottleneck to broader team-level proficiency.
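As a minimal sketch of this kind of segmentation, the snippet below computes the share of users at Level 3 or above per department and picks the segment with the lowest share as the first enablement target. The records are invented for illustration, and the Level 3 cut-off is an assumption; the same function works for role, seniority, tenure, or geography if the first element of each record is swapped.

```python
from collections import defaultdict

# HYPOTHETICAL records: (department, proficiency level 1-5). Invented for illustration.
records = [
    ("Engineering", 4), ("Engineering", 3), ("Engineering", 3), ("Engineering", 2),
    ("Marketing", 1), ("Marketing", 2), ("Marketing", 1), ("Marketing", 3),
]


def share_at_or_above(rows, min_level=3):
    """Return {segment: fraction of users at min_level or higher}."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for segment, level in rows:
        totals[segment] += 1
        if level >= min_level:
            hits[segment] += 1
    return {segment: hits[segment] / totals[segment] for segment in totals}


shares = share_at_or_above(records)
focus_first = min(shares, key=shares.get)  # lowest share of Level 3+ users
print(shares, focus_first)
```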

Figure 1 shows ROI confidence by department (is AI paying off?) and visibility confidence (am I seeing our team's AI use?). For our initial purposes here, focus on the ROI confidence column.

Figure 1. ROI confidence and visibility confidence.

(Source: The State of Enterprise AI 2026)

In Figure 1, the leading department for ROI is IT, where tools such as Claude Code are getting a lot of attention. The next department for ROI is Legal, which a) has large needs for research, where LLMs offer value and b) has several specialized tools that are getting less attention broadly than Claude Code, but that are getting good reviews from customers. These are the kinds of considerations that will help you plan and execute your progression from no AI, to AI adoption, to AI proficiency.

Visibility confidence, the next column in the figure, tends to be high where a business has licensed a tool for many users in a department and is confident it can track that. A warning, though: The fact that you have licensed one tool in a department doesn’t guarantee that there isn’t unsanctioned (“shadow”) AI usage, where you have less visibility, alongside that.

Building an AI Proficiency Program

Moving your organization up the proficiency spectrum requires more than training sessions. It requires a systematic approach that combines measurement, enablement, culture, and incentives.

Identify Your Champions

Every organization has a population of Level 4-5 users—your AI-native power users. These people are your most valuable asset in driving proficiency. Identify them through measurement data, not self-nomination. Then leverage them:

- As mentors—Pair them with Level 1-2 users for hands-on coaching

- As content creators—Have them document and share their workflows, prompts, and tool configurations

- As proof points—Use their results (quantified) to demonstrate what proficiency makes possible

- As cultural signals—Celebrate and recognize their achievements to signal that AI proficiency is valued

Make It Safe to Experiment

Proficiency develops through experimentation, and experimentation requires psychological safety. If employees fear that using AI “incorrectly” will be judged or punished, they’ll stay at Level 1 forever.

Create structured environments for experimentation—hackathons, AI happy hours (as Zapier does), dedicated “AI exploration” time, sandbox environments where employees can try new tools without risk.

Move Beyond One-Time Training

Replace one-time AI training with continuous enablement:

- Monthly tool briefings—What’s new, what’s changed, what’s possible now that wasn’t last month

- Use case libraries—Curated, role-specific examples of effective AI usage that are updated regularly

- Proficiency challenges—Structured programs that guide employees through progressively more sophisticated AI use cases

- Peer learning networks—Forums where employees share tips, workflows, and discoveries

Align Incentives

Meta’s decision to tie performance reviews to AI-driven impact is the most aggressive example, but the principle applies broadly. If you want proficiency to increase, recognize and reward it:

- Include AI proficiency in performance conversations

- Highlight proficiency leaders in team meetings and company communications

- Create “AI champion” designations for employees who reach Level 4-5

- Use proficiency data to identify high-potential employees for stretch opportunities

Measure Continuously and Recalibrate

Establish a continuous measurement cadence—not quarterly assessments, but ongoing behavioral data that shows where each employee sits on the proficiency spectrum at any given time. Recalibrate proficiency benchmarks regularly (monthly is ideal) to account for the evolving AI landscape.

Use the data to track progress, identify bottlenecks, and demonstrate ROI. The connection between rising proficiency scores and business outcomes (productivity, quality, speed, innovation) is the ultimate justification for continued AI investment.

Common Mistakes in AI Proficiency Programs

AI proficiency tends to map to tasks, which may cut across job titles and departments. Figure 2 (adapted from Larridin’s Scout product) shows how the software reports on what tasks AI is being used for. By showing task mapping, Scout helps managers find where AI might help specific individual contributors, whether they are most, some, or even just one of the members of a particular team.

Figure 2. AI usage by task, from Larridin Scout.

(Source: Larridin website)

Confusing Adoption with Proficiency

The most pervasive mistake. A 90% adoption rate means nothing if 85% of those users are Level 1 search replacers. Adoption is necessary but wildly insufficient. Don’t let high adoption numbers lull you into thinking your AI investment is working.

Using Static Proficiency Definitions

Defining proficiency once and measuring against that fixed standard produces increasingly meaningless data as the AI landscape evolves. What was “advanced” in January 2025 is “basic” in early 2026. Recalibrate continuously.

Relying on Self-Assessment

People are terrible at assessing their own AI proficiency. Beginners think they’re advanced. Experts underestimate themselves. Use behavioral data, not surveys, to measure proficiency.

One-Size-Fits-All Training

A Level 1 user and a Level 3 user need completely different interventions. Sending everyone through the same training program wastes time for advanced users and overwhelms beginners. Segment your enablement efforts based on proficiency data.
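Segmenting enablement by level can be as simple as a routing rule. The sketch below is illustrative only: the track names are drawn loosely from the program elements described earlier in this Guide (champion mentoring, proficiency challenges, workflow documentation), and the level boundaries are an assumption.

```python
def enablement_track(level: int) -> str:
    """Map a proficiency level (1-5) to a hypothetical enablement track."""
    if not 1 <= level <= 5:
        raise ValueError(f"level must be between 1 and 5, got {level}")
    if level <= 2:
        # Beginners get hands-on coaching rather than advanced material.
        return "paired coaching with a champion"
    if level == 3:
        # Augmented workers are ready for progressively harder use cases.
        return "proficiency challenges"
    # Levels 4-5 contribute back instead of sitting through basic training.
    return "mentor and workflow author"


for lvl in range(1, 6):
    print(lvl, enablement_track(lvl))
```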

Ignoring the Agentic Frontier

Most proficiency programs focus exclusively on conversational AI skills—how to write better prompts, how to iterate effectively. This is important but incomplete. The frontier of proficiency is agentic: delegating complex work to AI systems that execute autonomously. Organizations that don’t include agentic capabilities in their proficiency framework are training for yesterday’s AI, not tomorrow’s.

Measuring Individuals but Not Teams

AI proficiency has network effects. A team where multiple members are Level 3+ can accomplish far more than the sum of their individual capabilities because they share techniques, build on each other’s workflows, and create AI-augmented team processes. Measure and optimize for team-level proficiency, not just individual scores.

The Proficiency Imperative

The data is unambiguous. The gap between AI-proficient employees and everyone else is large, growing, and steeper than any normal productivity distribution. Meta’s 30% vs. 80% gap will only widen. OpenAI’s 6x engagement difference will only grow as AI tools become more capable.

For enterprises, this creates both a massive opportunity and an urgent risk. The opportunity: organizations that systematically build AI proficiency across their workforce will operate at a fundamentally different level of productivity and capability. The risk: organizations that focus only on adoption—getting tools deployed, getting people logged in—will find themselves with expensive AI subscriptions and a workforce stuck at Level 1.

The move from adoption to proficiency is the next frontier of enterprise AI strategy. The organizations that make this move first will set the pace. The ones that wait will spend the next several years trying to catch up.

FAQs: AI Proficiency Guide

Q: What is the AI proficiency gap, and why does it matter more than adoption rates?

The proficiency gap is the difference between employees who merely use AI and those who extract real value from it, and it’s the biggest untold story in enterprise AI. OpenAI’s 2025 State of Enterprise AI report revealed a 6x productivity gap between power users and typical employees using the same tools, with the same access, in the same organization. Meta put concrete numbers on the divide: while AI tools produced a 30% output increase for the average engineer, power users saw an 80% improvement year-over-year.

EY’s 2025 Work Reimagined Survey, spanning 15,000 employees across 29 countries, found that 88% of employees use AI daily, but only 5% use it in advanced ways. This means most organizations have high adoption rates that mask dangerously low proficiency. As the Guide puts it, having 90% of your workforce in Bucket 1 is fundamentally different from having 30% in Bucket 2 and 10% in Bucket 3, even though both scenarios might report the same “adoption rate.” The entire ROI of an AI investment depends on where people fall on the proficiency spectrum, not whether they’ve logged in.

Q: What does the AI proficiency spectrum look like, from beginner to AI-native?

The Guide defines five levels on the AI proficiency spectrum:

- Level 1, the Search Replacer, treats AI as a slightly better Google: simple questions, first-output acceptance, 5–10% productivity gain on limited tasks.

- Level 2, the Task Automator, uses AI for specific repetitive tasks such as email drafting and document summarization, achieving 15–25% improvement on targeted work.

- Level 3, the Augmented Worker, makes AI a genuine work partner through multi-turn conversations, iterative refinement, and multiple models, reaching 30–50% productivity gains across a range of activities.

- Level 4, the Power User, is fluent across multiple models and tools, smoothly selects the right tool for each job, leverages advanced capabilities such as MCP and APIs, and achieves 50–100% more output than their pre-AI baseline.

- Level 5, the AI-Native Orchestrator, operates at the agentic frontier: not just using AI, but delegating to it, setting up autonomous workflows, and reimagining what a single person can accomplish.

As Mark Zuckerberg observed, “projects that used to require big teams can now be accomplished by a single, very talented person.” Most enterprise employees today, however, sit only at Level 1 or 2, which is why closing the proficiency gap represents such an outsized opportunity.

Q: How is AI proficiency measured across an enterprise?

Measuring AI proficiency is fundamentally harder than measuring adoption. You can’t survey your way to proficiency data: the Dunning-Kruger effect means beginners overestimate their abilities, while experts underestimate theirs. And vendor dashboards can tell you someone used Copilot 47 times this month, but not whether those interactions were sophisticated multi-turn conversations or 47 dismissed autocomplete suggestions.

Larridin measures AI proficiency across nine dimensions:

- Model intuition (calibrating expectations of what AI can do)

- Interaction sophistication (multi-turn dialogue vs. single-shot queries)

- Multi-model fluency (leveraging different models for different strengths)

- Tooling sophistication (MCP, connectors, APIs)

- Right tool for the right job (specialized tools vs. general-purpose chatbots)

- Use case diversity (breadth of application across work activities)

- New tool adoption speed

- Agentic capability (delegating complex tasks to autonomous AI)

- Habit consistency (sustained behavior over time)

Because the AI landscape evolves so rapidly, Larridin recalibrates its proficiency definitions every 30 days to ensure that scores reflect current expectations, not historical ones.

Q: Why is AI proficiency a moving target, and what does that mean for training programs?

What qualified as “advanced” AI proficiency six months ago might qualify only as “baseline” today. Models get smarter every quarter; new capabilities such as agentic workflows and computer use emerge constantly; and new tools launch weekly. An employee who was a power user in mid-2025, skilled at prompt engineering with GPT-4, might qualify only as a task automator by early 2026 standards if they haven’t adopted agentic workflows, multi-model strategies, and new tool categories.

This has direct implications for how organizations build AI proficiency programs. One-time training is almost worthless; by the time employees absorb the material, and start to use it, the frontier may have moved.

Effective enablement requires continuous, updated training: monthly tool briefings on what’s new and what’s changed, regularly refreshed use case libraries with role-specific examples, structured proficiency challenges that guide employees through progressively more sophisticated use cases, and peer learning networks where employees share discoveries.

Organizations should also set aside and protect dedicated experimentation time, such as AI happy hours, hackathons, and sandbox environments, because proficiency develops through hands-on exploration, not passive instruction.

Q: What are the most common mistakes organizations make in AI proficiency programs?

The Guide identifies six recurring pitfalls. The four most common challenges are:

- The most pervasive is confusing adoption with proficiency; a 90% adoption rate means nothing if 85% of those users are Level 1 search replacers.

- Using static proficiency definitions is another trap, since what was “advanced” in early 2025 is “basic” a year later.

- Relying on self-assessment produces distorted results, because people are consistently poor at gauging their own AI capabilities.

- One-size-fits-all training wastes time for advanced users while overwhelming beginners; a Level 1 user and a Level 3 user need completely different interventions.

Two less obvious mistakes round out the list:

- Ignoring the agentic frontier means training only for conversational AI skills such as prompt engineering, when the real proficiency edge increasingly lies in delegating complex work to autonomous AI systems.

- Measuring individuals but not teams misses the network effects of AI proficiency. A team where multiple members are at Level 3 or above can accomplish far more than the sum of their individual capabilities, because they share techniques, build on each other’s workflows, and create AI-augmented team processes.

Effective proficiency programs segment enablement by level, measure at both the individual and team level, and recalibrate continuously.


Larridin measures AI proficiency across nine dimensions, recalibrated every 30 days, giving enterprises a real-time view of how effectively their workforce is using AI—and exactly where to invest to move the needle.
