Achieving ROI with AI requires efficiency, and having tons of unproductive AI tools in use just adds friction and ups your security risks. See the Larridin Guide to ROI with Enterprise AI and our blog post, What is “Shadow” AI, for context.

Based on our experience here at Larridin, your enterprise is likely to have somewhere between 200 and 300 AI tools in active use. And if you’re like other large companies, you know about only 60 of them.

That means the rest are unsanctioned AI tools that your employees use on personal licenses. If these unknown tools are productive, you’re incurring unneeded security risk because of the personal licenses, and you’re failing to scale that success across your employee base. If they’re unproductive, you’re incurring the same risks and adding significant drag to your AI implementation efforts.

Here’s how to get control, without killing the employee-driven experimentation that makes AI valuable.

Interested in developer productivity? See Larridin's Developer Productivity Overview.

The Scale of the Problem

The average enterprise manages more than 275 SaaS applications. AI tools are the fastest-growing category within that portfolio, and the least governed.

When CIOs estimate their organization’s AI tool footprint, the typical answer they give is 60 to 70 tools. Then they deploy monitoring, and they usually find that the real number is 200 to 300.

That gap is not a rounding error. It is a governance crisis with compounding financial, security, and compliance consequences, along with a lot of lost opportunity to improve your operations through successful AI tool use.

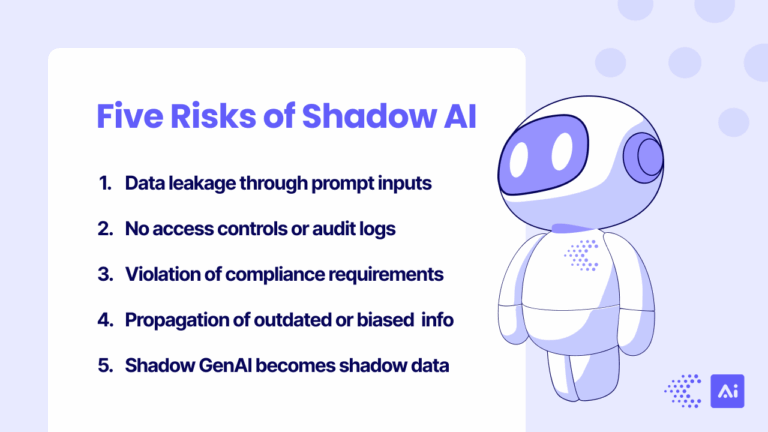

Nearly half of employees admit to using AI tools without employer approval, according to multiple industry surveys. More than a third are sharing confidential enterprise data with AI systems that fall outside company oversight: research datasets, employee records, financial information flowing into tools IT has never vetted. One in five organizations has already experienced a breach tied to shadow AI. Figure 1 shows major risks of shadow AI.

Figure 1. Major risks of shadow AI relate to data security and governance.

(Figure courtesy Concentric AI.)

The financial waste is equally severe. Organizations consistently spend 3-5x more than they think on AI tools, once you account for individual subscriptions expensed across departments, overlapping solutions purchased by different teams, and free-tier tools quietly exfiltrating your company data. Zylo’s SaaS Management Index found that 53% of all paid-for SaaS licenses go unused, costing the average enterprise over $21 million annually. For AI tools, where adoption is more fragmented and less centrally managed than traditional SaaS, that waste percentage is likely higher.

And with the new wave of AI-powered tools, the problem is only accelerating. McKinsey’s 2025 State of AI report found that the share of AI solutions purchased, rather than built internally, jumped from 47% to 76% between 2024 and 2025. More vendors, more categories, more overlap, more sprawl. Gartner projects that 40% of enterprise applications will feature task-specific AI agents by the end of 2026, up from less than 5% in 2025. Each such application adds another layer of tools to manage.

Why AI Sprawl Is Different From SaaS Sprawl

CIOs have fought tool sprawl before. The SaaS explosion of the 2010s taught IT leaders hard lessons about unmanaged procurement and redundant licenses. But AI tool sprawl is a fundamentally different problem, and treating it like traditional SaaS rationalization will leave critical risks unaddressed.

Data flows are the risk, not just using the wrong license. A redundant project management tool wastes money. A redundant AI tool wastes money and creates a new data exposure surface. Every AI tool employees interact with is a tool they are potentially feeding proprietary data to: customer records, financial projections, source code, strategic plans. Even an enterprise account and a personal account for the same tool (same URL, same interface) carry materially different risk profiles, because their training data policies differ. Any use of personal accounts is therefore a governance exposure.

Model risk compounds across tools. Each AI tool introduces its own model, its own biases, its own hallucination patterns, and its own data retention policies. Ten redundant AI writing tools don’t just cost 10x; they introduce 10 different sets of model risk, 10 different terms of service, and 10 different answers to “Where does our data go?” They also fragment your efforts to share and promote best practices.

Proliferation is faster. A traditional SaaS tool required procurement approval, implementation, training, and rollout. An AI tool requires a browser tab and an email address. Employees can adopt a new AI tool in under two minutes. The speed of proliferation outpaces any governance process designed for the SaaS era.

The landscape hasn’t settled. As the AI Adoption Guide documents, we don’t yet know which LLM, if any, will be the definitive enterprise standard. Every tool category (coding, writing, design, data analysis, research) has multiple credible competitors, with new entrants launching weekly. This isn’t a mature market where consolidation onto a single vendor is obvious. Premature lock-in is its own risk.

The Rationalization Framework

Effective AI tool rationalization follows five stages. Skip any one of them and the effort either stalls or produces decisions you may end up reversing in six months.

1. Discover: Build a Complete Inventory, Including “Shadow” AI

You cannot rationalize what you cannot see. And with AI tools, what you can’t see is likely to be the majority of your portfolio.

A complete discovery process goes well beyond the procurement ledger. It includes expense report analysis, network traffic monitoring, browser and endpoint visibility, SSO and OAuth log review, and department-level surveys. Most organizations find their actual AI tool footprint is 3-4x larger than what IT tracks.

The discovery phase is not about catching employees doing something wrong. It is about establishing the visibility foundation that makes every subsequent decision evidence-based, rather than political.
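As a minimal sketch of how those discovery sources might be combined, the snippet below merges tool sightings into one inventory and flags anything IT does not already track. The function name `build_inventory`, the source labels, and the tool names are all hypothetical, and real log data would need far more careful name matching than the simple lowercasing assumed here.

```python
from collections import defaultdict


def build_inventory(sources, sanctioned):
    """Merge tool sightings from several discovery sources into one inventory.

    sources: dict mapping a source label (e.g. "expense_reports") to an
             iterable of tool names seen in that source.
    sanctioned: set of tool names that IT already tracks.
    """
    seen_in = defaultdict(set)
    for source_name, tools in sources.items():
        for tool in tools:
            # Assumption: lowercasing and trimming is enough to match names.
            seen_in[tool.strip().lower()].add(source_name)

    known = {name.strip().lower() for name in sanctioned}
    return {
        tool: {"sources": sorted(found), "sanctioned": tool in known}
        for tool, found in seen_in.items()
    }


# Hypothetical sightings; none of these tool names come from real data.
sources = {
    "expense_reports": ["WriterBot", "CodeHelper"],
    "sso_logs": ["writerbot", "ChartGenie"],
    "network_traffic": ["CodeHelper", "ChartGenie", "NoteTaker"],
}
inventory = build_inventory(sources, sanctioned={"WriterBot"})

# Tools seen in use that IT does not track: the "shadow" portion.
shadow = sorted(t for t, info in inventory.items() if not info["sanctioned"])
```

Recording which sources each tool appeared in keeps the evidence attached to the inventory entry, which supports the evidence-based decisions the later stages rely on.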

2. Classify: By Risk Level, Overlap, and Strategic Value

A flat list of 250 tools is not actionable. Each tool needs to be classified along three dimensions:

Risk level. What data does this tool handle? What are its training data policies, retention policies, and data sovereignty implications? Does it hold enterprise-grade security attestations such as SOC 2 or ISO 27001, and does it support HIPAA compliance where that applies? Is the employee using a consumer tier or an enterprise tier?

Functional overlap. Which tools serve the same purpose for the same user population? Twelve AI writing tools across twelve departments is a consolidation opportunity. One specialized AI tool for legal research, with no overlap to other functionality, is not.

Strategic value. Is this tool aligned with the organization’s AI strategy? Does it serve a use case that drives measurable business impact? Or is it a novelty that attracted one user, or a few, and went dormant? Usage data, not opinion, should answer this question.
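One way to make the functional-overlap dimension concrete is to group tools by purpose and user population, then surface any group with more than one member. The sketch below assumes a hypothetical `Tool` record and made-up tool names; the field values are illustrative, not a prescribed taxonomy.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    function: str      # what the tool is for, e.g. "writing"
    audience: str      # the user population it serves
    risk: str          # assumed scale: "low", "medium", or "high"
    active_users: int  # usage data, used to judge strategic value


def overlap_groups(tools):
    """Return groups of tools that share both a function and an audience."""
    groups = defaultdict(list)
    for tool in tools:
        groups[(tool.function, tool.audience)].append(tool.name)
    # Only groups with two or more tools are consolidation candidates.
    return {key: sorted(names) for key, names in groups.items() if len(names) > 1}


# Hypothetical portfolio entries for illustration only.
tools = [
    Tool("WriterBot", "writing", "all staff", "medium", 180),
    Tool("DraftPal", "writing", "all staff", "high", 25),
    Tool("CaseFinder", "legal research", "legal", "low", 14),
]
overlaps = overlap_groups(tools)
```

Here the two writing tools land in one group, while the specialized legal research tool has no overlap and is left out.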

3. Consolidate: Reduce Redundancy, Negotiate Enterprise Agreements

With classification complete, you can make informed decisions about what to keep, consolidate, or retire.

Negotiate enterprise agreements for tools that earned their place. Replace scattered individual subscriptions with centrally managed licenses. This single step often recovers 30-50% of tool spend, without removing a single capability.

Sunset redundant tools where multiple options serve the same function for the same audience. Migrate users, with clear communication and transition support, not with a surprise “Blocked” notice.

Bring shadow tools into the fold. If employees adopted an unsanctioned tool and it delivers clear value, evaluate it for enterprise deployment, and compare it to alternatives, rather than reflexively blocking it. The signal delivered by bottom-up adoption is valuable intelligence about what your workforce actually needs.

4. Govern: Approval Workflows and Ongoing Monitoring

Rationalization without governance unravels within months, as new tools enter the portfolio unchecked.

The AI Governance Guide describes a governance spectrum: Educate, Warn, Monitor, Restrict, Block. This flexible approach allows nuanced responses, calibrated to risk level, rather than a binary approved/blocked decision. Most organizations should operate in the middle: making sanctioned tools easy to find, requiring lightweight approval for new tools, and monitoring usage patterns continuously.

Build a fast-track evaluation process for new AI tools. If the evaluation takes six months, employees won’t wait. A 2-4 week assessment cycle that covers data handling, security posture, and functional overlap keeps governance from becoming a bottleneck.

5. Optimize: Usage-Based Decisions and ROI Tracking

Rationalization is not a one-time cleanup. It is a continuous optimization cycle.

Track actual usage against license allocation. Look at the full cost picture beyond license fees: integration costs, training time, productivity impact, and risk exposure. Tools with high license counts but low active usage are candidates for downsizing. Tools with small but passionate user bases generating disproportionate value are candidates for expansion.
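The usage-versus-allocation check above can be sketched as a simple ratio. The function names, the sample portfolio, and especially the 0.5 threshold are assumptions for illustration; the right cut-off depends on your own cost picture. Spotting small, high-value user bases would need value data that this sketch does not model.

```python
def utilization(active_users: int, licenses: int) -> float:
    """Share of paid licenses that are actually in use (0.0 to 1.0)."""
    if licenses <= 0:
        raise ValueError("licenses must be positive")
    return active_users / licenses


def downsizing_candidates(portfolio, threshold=0.5):
    """Return tool names whose utilization is below `threshold`.

    portfolio: dict mapping tool name -> (active_users, licenses).
    threshold: hypothetical cut-off; 0.5 is an arbitrary starting point.
    """
    return sorted(
        name
        for name, (active, licenses) in portfolio.items()
        if utilization(active, licenses) < threshold
    )


# Hypothetical license data for illustration only.
portfolio = {
    "WriterBot": (180, 200),   # most licenses in use
    "ChartGenie": (12, 100),   # many licenses, little active usage
    "CodeHelper": (40, 50),
}
candidates = downsizing_candidates(portfolio)
```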

Run 90-day review cycles. The AI tool landscape moves too fast for annual reviews. Every quarter, reassess your portfolio against current usage data, new market entrants, and evolving organizational needs.

The Decision Matrix: Keep, Consolidate, or Kill

When evaluating any individual AI tool, two criteria, usage and strategic value, combine into four possible decisions:

High usage + high strategic value = Keep. Negotiate an enterprise agreement, ensure governance coverage, and invest in broader adoption.

High usage + low strategic value = Evaluate. Usage is high for a reason. Understand why before cutting. The tool may be addressing a need your more strategic tools don’t cover.

Low usage + high strategic value = Expand. The tool is valuable but under-adopted. Invest in training, enablement, and better integration before concluding it doesn’t work.

Low usage + low strategic value = Retire. Sunset the tool, migrate remaining users, and reallocate the budget.
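The four combinations above can be written as a small lookup table. This is a sketch only: the names `Decision` and `decide` are hypothetical, and how you decide that usage or strategic value counts as "high" is left to the caller, since no thresholds are defined here.

```python
from enum import Enum


class Decision(Enum):
    KEEP = "Keep"
    EVALUATE = "Evaluate"
    EXPAND = "Expand"
    RETIRE = "Retire"


# Keys are (high_usage, high_strategic_value).
_MATRIX = {
    (True, True): Decision.KEEP,
    (True, False): Decision.EVALUATE,
    (False, True): Decision.EXPAND,
    (False, False): Decision.RETIRE,
}


def decide(high_usage: bool, high_strategic_value: bool) -> Decision:
    """Map the two criteria onto one of the four decisions."""
    return _MATRIX[(bool(high_usage), bool(high_strategic_value))]


# A widely used tool that is not strategically aligned: investigate first.
result = decide(high_usage=True, high_strategic_value=False)
```

Keeping the mapping in one table makes the policy easy to audit and change without touching the code that calls it.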

For overlapping tools in the same category, run a structured bake-off with the actual user population. Let the people who use these tools daily evaluate which one serves their workflow best. Top-down tool selection that ignores user preference creates unsanctioned, “shadow” AI.

The CIO’s Dilemma: Control vs. Innovation

This is the tension that defines AI tool rationalization. Lean too far toward control and you kill the bottom-up experimentation that drives AI adoption and proficiency. Lean too far toward permissiveness and you create ungoverned risk, wasted spend, and compliance exposure.

The data is clear on what happens at both extremes.

Too restrictive: Organizations that respond to AI sprawl by blocking everything and mandating a single vendor per category see adoption stall. Employees stop experimenting. The organization falls behind competitors that allow AI proficiency to grow organically. And paradoxically, the most resourceful employees find workarounds anyway, driving usage further underground, where it is invisible to governance.

Too permissive: Organizations with no governance see AI spend balloon unpredictably, data exposure incidents multiply, and compliance audits become nightmares. When auditors ask, “What AI tools does your organization use, and how is data handled?” the CIO has no credible answer.

The healthy benchmark from Larridin’s research is approximately 3-4% of total AI usage occurring in unauthorized tools. Zero percent means experimentation is dead. Twenty percent means governance is failing. That 3-4% range represents a posture where the vast majority of usage is governed and protected, while enough experimentation continues to surface new tools and capabilities.
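As a rough sketch of how that benchmark might be checked, the snippet below computes the unauthorized share of usage and compares it with the 3-4% band. The function names and the idea of counting "usage events" are assumptions; the text above defines only the 0%, 3-4%, and 20% reference points, so everything in between is simply reported as outside the band.

```python
def shadow_share(unauthorized_events: int, total_events: int) -> float:
    """Percentage of all AI usage that happens in unauthorized tools."""
    if total_events <= 0:
        raise ValueError("total_events must be positive")
    return 100.0 * unauthorized_events / total_events


def describe(share_pct: float) -> str:
    """Compare a shadow-usage percentage with the reference points above."""
    if share_pct == 0:
        return "zero unauthorized usage: experimentation may be dead"
    if share_pct >= 20:
        return "at or above 20%: governance is failing"
    if 3.0 <= share_pct <= 4.0:
        return "within the 3-4% benchmark"
    # Assumption: the source does not grade values between the reference points.
    return "outside the 3-4% benchmark"


# Hypothetical counts: 35 of 1,000 usage events were in unauthorized tools.
share = shadow_share(35, 1000)
status = describe(share)
```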

The organizations that get this balance right treat governance as enablement, not restriction. Every “no” comes with a “yes, and here’s how to do it safely.” They move fast on tool evaluation so employees don’t have to choose between waiting and going rogue. And they invest in making their sanctioned tool stack genuinely excellent, because the best defense against shadow AI is approved tools that are actually better than the unauthorized alternatives.

This Is Not a One-Time Project

The single most common mistake in AI tool rationalization is treating it as a cleanup project with a start date and an end date.

AI tool sprawl is a recurring condition, not a one-time event. New AI tools and features launch weekly. Employees discover and adopt them continuously. Gartner predicts 40% of enterprise apps will embed AI agents by the end of 2026. The portfolio you rationalized last quarter will look different this quarter.

Build rationalization into your ongoing governance rhythm:

- Monthly: Review new tool discoveries and conduct fast-track evaluations

- Quarterly: Full portfolio review against usage data, overlap analysis, and spend optimization

- Annually: Strategic reassessment of vendor relationships, enterprise agreements, and category-level decisions

The organizations moving from experimentation to execution in 2026 are the ones that treat AI tool management as a continuous discipline, not a project that gets checked off and forgotten. VCs predict that enterprises will spend more on AI this year, but through fewer, more strategically chosen vendors. The CIOs who have a rationalization framework in place will capture the efficiencies and enhancements that are becoming available. The ones who don’t will keep discovering the 200-tool surprise.

Larridin gives CIOs complete visibility into every AI tool in use across the enterprise – sanctioned and unsanctioned – with adoption depth, usage patterns, and overlap analysis that makes rationalization decisions evidence-based rather than political.

Talk to us about rationalizing your AI tool portfolio.