AI proficiency is the measurable capability gap between employees who merely use AI and those who extract transformational value from it — and closing that gap is the single highest-leverage move an enterprise can make in 2026.

TL;DR / Quick Definition

AI proficiency is a multi-dimensional measure of how effectively a person uses AI tools to produce real business outcomes, not just whether they use them at all. It spans how people think about AI, how they interact with it, how they select tools, and, ultimately, whether they can delegate complex work to AI systems that act autonomously. If AI adoption tells you who’s logged in, proficiency tells you who’s actually getting value.

Why AI Proficiency Matters in 2026

The enterprise world has spent the last two years celebrating adoption numbers. And on the surface, those numbers look great. EY’s 2025 Work Reimagined Survey found that 88% of employees use AI in their daily work. That sounds like mission accomplished.

It isn’t. Not even close.

That same EY survey revealed that usage is overwhelmingly limited to basic search and summarization. Only 5% of employees are using AI in advanced ways that transform how they work. The other 83% are doing the digital equivalent of using a smartphone only to make phone calls.

The gap between average users and power users is not marginal; it is staggering. OpenAI’s 2025 State of Enterprise AI report documented a 6x engagement gap between AI power users and typical employees. Same tools. Same organization. Same access. Six times more engagement reported by people who know how to use AI well.

Meta’s internal data tells the same story in sharper relief. The company reported a 30% increase in output per engineer overall from AI tools — but power users saw an 80% year-over-year increase. Nearly three times the average. As Mark Zuckerberg put it, projects that once required entire teams are now accomplished by a single, highly proficient individual.
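The arithmetic behind the figures quoted so far can be checked in a few lines. This is only a sketch: the variable names are illustrative, and the inputs are the percentages cited above, not independent data.

```python
# Figures as quoted in the text above (EY and Meta); variable names are illustrative.
ey_total_users_pct = 88      # employees using AI in their daily work
ey_advanced_users_pct = 5    # employees using AI in advanced ways
meta_avg_gain_pct = 30       # output increase per engineer overall
meta_power_gain_pct = 80     # output increase for power users

# Share of employees who use AI, but only in basic ways.
basic_only_pct = ey_total_users_pct - ey_advanced_users_pct

# How many times larger the power-user gain is than the average gain.
power_to_avg_ratio = meta_power_gain_pct / meta_avg_gain_pct

print(f"Basic-only users: {basic_only_pct}%")                      # 83%
print(f"Power-user gain vs. average: {power_to_avg_ratio:.2f}x")   # 2.67x
```

The ratio of about 2.67 is what the text rounds to "nearly three times."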

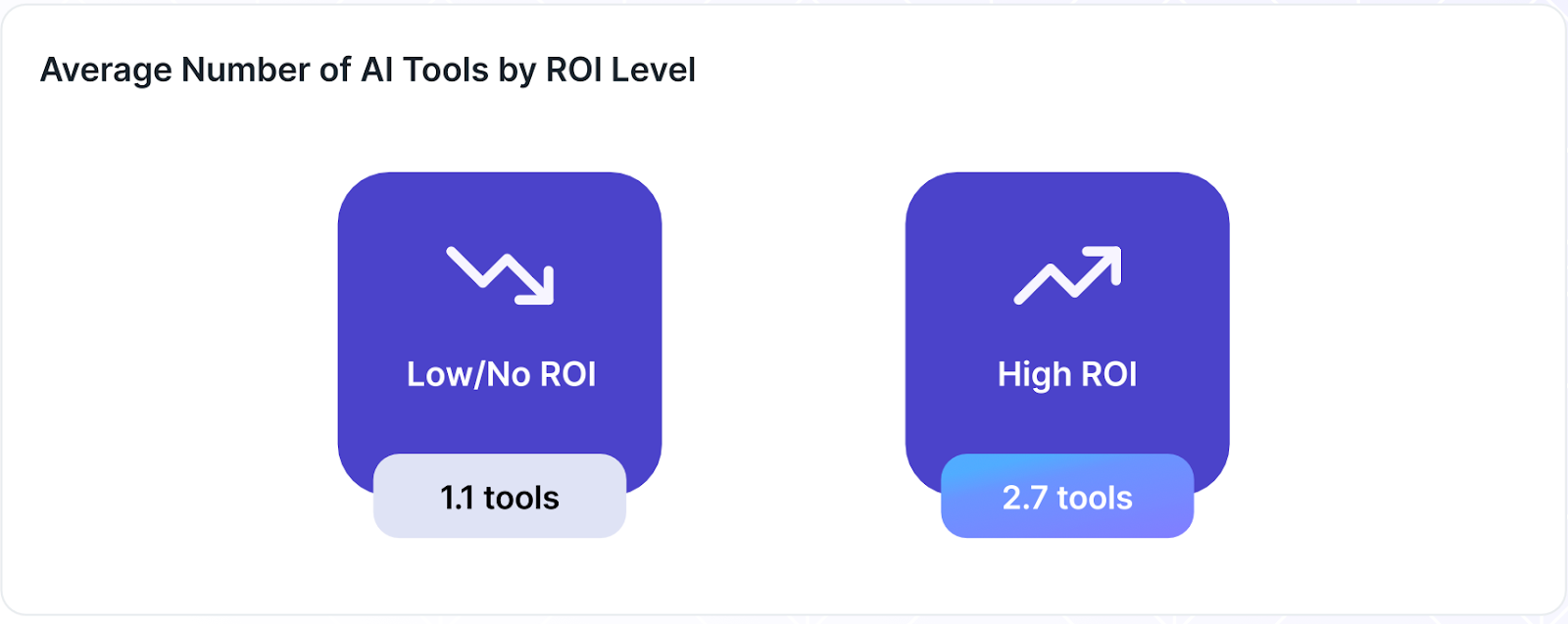

More advanced users also use more AI tools. Larridin’s report, The State of Enterprise AI 2026, found that users with a high expectation of achieving ROI used an average of 2.7 tools each. Those with a low expectation used an average of 1.1.

Figure 1. ROI confidence and visibility confidence.

(Source: The State of Enterprise AI 2026)

This is the proficiency gap. And if your organization is only tracking adoption, you are measuring the wrong thing.

Core Framework & Key Concepts

Sam Altman’s Three Buckets

Sam Altman has described three distinct modes of AI usage that map cleanly to enterprise proficiency levels:

- Bucket 1 — The Google Replacement. Simple question-in, answer-out. The user treats AI as a search engine. Productivity gain: modest, maybe 5-10%.

- Bucket 2 — The Augmented Worker. AI is integrated into actual work — drafting, analyzing, brainstorming, iterating. The user treats AI as a thinking partner. Productivity gain: meaningful and compounding.

- Bucket 3 — The AI-Native. The user approaches every problem AI-first, orchestrates complex setups across tools and files, and reimagines what one person can accomplish. Productivity gain: transformational.

The jump between these buckets is not linear. It is exponential. Most enterprise employees sit in Bucket 1 today. The ROI of your AI investment depends on moving them up.

The Nine Dimensions of AI Proficiency

Proficiency is not a single skill. It spans nine measurable dimensions:

- Model Intuition (understanding what AI can and cannot do)

- Interaction Sophistication (multi-turn, context-rich dialogue vs. single-shot queries)

- Multi-Model Fluency (leveraging different models for different tasks)

- Tooling Sophistication (using MCP, APIs, connectors, and integrations beyond basic chat)

- Right Tool for the Right Job (selecting specialized AI tools over general-purpose LLMs)

- Use Case Diversity (applying AI across research, writing, analysis, coding, and creative work)

- New Tool Adoption Speed (keeping pace with the rapidly evolving landscape)

- Agentic Capability (delegating complex tasks to autonomous AI agents)

- Habit Consistency (sustaining proficient behavior over time, not just spiking after a training session)

Together, these dimensions capture the full picture of AI fluency — something no single metric or survey question can approximate.
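One way to picture a nine-dimension profile is as a simple record with one score per dimension. The sketch below is an assumption throughout: the 0-to-1 scale, the unweighted mean, and the example scores are all hypothetical, and nothing here describes how any vendor actually scores proficiency.

```python
from dataclasses import dataclass, fields


@dataclass
class ProficiencyProfile:
    """One score per dimension, on a hypothetical 0-to-1 scale."""
    model_intuition: float
    interaction_sophistication: float
    multi_model_fluency: float
    tooling_sophistication: float
    right_tool_for_job: float
    use_case_diversity: float
    new_tool_adoption_speed: float
    agentic_capability: float
    habit_consistency: float

    def composite(self) -> float:
        """Unweighted mean across all nine dimensions (an assumption)."""
        values = [getattr(self, f.name) for f in fields(self)]
        return sum(values) / len(values)

    def weakest(self) -> str:
        """Name of the lowest-scoring dimension."""
        return min(fields(self), key=lambda f: getattr(self, f.name)).name


# Made-up scores for illustration only.
profile = ProficiencyProfile(0.8, 0.7, 0.4, 0.2, 0.5, 0.6, 0.5, 0.1, 0.7)
print(round(profile.composite(), 2))
print(profile.weakest())
```

Keeping the dimensions separate, rather than storing only the composite, is what makes it possible to see where a person is weak even when the overall number looks fine.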

The AI Proficiency Spectrum (5 Levels)

These dimensions map to a five-level proficiency spectrum:

- Level 1: Search Replacer. Uses AI as a better Google. Single-shot queries. One tool. ~5-10% productivity impact on limited tasks.

- Level 2: Task Automator. Uses AI for specific repetitive tasks (email drafts, summaries). Better context but still transactional. ~15-25% impact on targeted tasks.

- Level 3: Augmented Worker. AI is a genuine work partner. Multi-turn conversations, multiple models, several use cases. ~30-50% impact across activities.

- Level 4: Power User. Fluent across models and tools. Leverages advanced capabilities (MCP, APIs, custom instructions). AI is embedded in how they think. ~50-100%+ impact.

- Level 5: AI-Native Orchestrator. Operates at the agentic frontier. Delegates to autonomous AI agents. Reimagines what one person can accomplish. Impact is transformational.

Most enterprise employees today sit at Level 1 or 2. The organizations that move a meaningful share of their workforce to Level 3 and above will capture disproportionate returns. Those that develop Level 5 talent will have capabilities their competitors cannot match.
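The "share at Level 3 and above" idea can be expressed as a small calculation over a headcount by level. This is a minimal sketch: the `Level` enum mirrors the five levels named above, while `share_at_or_above` and the headcount numbers are hypothetical and exist only for illustration.

```python
from enum import IntEnum


class Level(IntEnum):
    """The five levels of the spectrum, in the order listed above."""
    SEARCH_REPLACER = 1
    TASK_AUTOMATOR = 2
    AUGMENTED_WORKER = 3
    POWER_USER = 4
    AI_NATIVE_ORCHESTRATOR = 5


def share_at_or_above(headcount: dict[Level, int], floor: Level) -> float:
    """Fraction of the workforce at `floor` or any higher level."""
    total = sum(headcount.values())
    if total == 0:
        return 0.0
    qualifying = sum(n for level, n in headcount.items() if level >= floor)
    return qualifying / total


# Made-up headcount for illustration only; not survey data.
example_headcount = {
    Level.SEARCH_REPLACER: 500,
    Level.TASK_AUTOMATOR: 300,
    Level.AUGMENTED_WORKER: 150,
    Level.POWER_USER: 40,
    Level.AI_NATIVE_ORCHESTRATOR: 10,
}

share = share_at_or_above(example_headcount, Level.AUGMENTED_WORKER)
print(f"Share at Level 3 or above: {share:.0%}")  # 200 of 1000 -> 20%
```

Tracking this share over time, rather than a single adoption percentage, would show whether people are actually moving up the spectrum.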

Common Misconceptions

“High usage = high proficiency.” This is the most pervasive mistake in enterprise AI today. A user who fires off 50 basic queries a day is not more proficient than one who runs three deeply sophisticated, multi-turn sessions. Frequency of use and quality of use are entirely different variables. Vendor dashboards that count logins and interactions tell you nothing about proficiency.
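The difference between frequency and quality can be made concrete with two made-up users. In this sketch, each list holds the number of turns in each session; using mean turns per session as a stand-in for depth is an assumption chosen for illustration, not an established proficiency metric.

```python
from statistics import mean

# Turns per session for two hypothetical users (illustrative data only).
frequent_user_sessions = [1] * 50      # 50 single-shot queries
deep_user_sessions = [12, 18, 9]       # 3 long, multi-turn sessions


def interaction_count(sessions: list[int]) -> int:
    """What a login-and-interaction dashboard would count."""
    return len(sessions)


def mean_turns_per_session(sessions: list[int]) -> float:
    """A crude depth proxy: average turns per session (an assumption)."""
    return mean(sessions)


for name, sessions in [("frequent", frequent_user_sessions),
                       ("deep", deep_user_sessions)]:
    print(name, interaction_count(sessions), mean_turns_per_session(sessions))
```

Ranked by interaction count, the first user looks far more engaged; ranked by depth, the order reverses. That is why a count-based dashboard alone cannot stand in for a proficiency measure.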

“Self-assessment surveys can measure this.” They cannot. The Dunning-Kruger effect is alive and well in AI usage: beginners consistently overestimate their abilities, while genuine experts underestimate theirs. Any proficiency measurement built on self-reported data will produce distorted results that point decisions in the wrong direction. Behavioral observation, meaning analysis of how people actually interact with AI rather than what they claim, is the only reliable approach.

“Proficiency is a fixed target.” What qualified as “advanced” six months ago is “baseline” today. The AI landscape moves faster than any technology in history. A static proficiency definition becomes meaningless within a quarter. Effective measurement requires continuous recalibration — ideally monthly — against the current frontier of what is possible, including AI impact intelligence and agentic capabilities that barely existed a year ago.
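Recalibration can be illustrated by scoring the same behavior against a moving reference point. The sketch below is hypothetical in every detail: `relative_score`, the idea of a single numeric "frontier," and the numbers themselves are assumptions used only to show why a static definition goes stale.

```python
def relative_score(raw_score: float, frontier_score: float) -> float:
    """Express a raw capability score as a fraction of the current frontier."""
    if frontier_score <= 0:
        raise ValueError("frontier_score must be positive")
    # Cap at 1.0 so nobody scores above the frontier itself.
    return min(raw_score / frontier_score, 1.0)


# Hypothetical numbers: identical user behavior, two different frontiers.
raw = 8.0
earlier_frontier = 10.0
current_frontier = 20.0

before = relative_score(raw, earlier_frontier)
after = relative_score(raw, current_frontier)

print(f"Against the earlier frontier: {before:.0%}")  # 80%
print(f"Against the current frontier: {after:.0%}")   # 40%
```

The user did nothing differently, yet the relative score halves once the frontier moves. A measurement that never updates its reference point would keep reporting the earlier, flattering number.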

How It Connects

AI proficiency is the natural next step after AI adoption. Adoption asks whether your workforce is using AI. Proficiency asks how well. Impact, which we will cover in a future Guide, captures the organizational benefit.

Without proficiency measurement, high adoption numbers can mask a workforce stuck at the most superficial levels of AI usage — expensive licenses generating minimal return.

Proficiency also overlaps with and deepens AI fluency, which captures the ease and naturalness with which people interact with AI systems. And it feeds directly into AI execution intelligence — the organizational capability to translate AI proficiency into measurable business outcomes at scale.

For the complete framework — including detailed breakdowns of each proficiency dimension, enterprise measurement strategies, and a step-by-step guide to building an AI proficiency program — see the full Guide: AI Proficiency: The Complete Guide.

Larridin measures AI proficiency across nine dimensions, recalibrated every 30 days, giving enterprises a real-time view of how effectively their workforce uses AI — and exactly where to invest to move the needle.