You may formally have the title. Congratulations! Or you may just have the responsibility, under a different title. In either case…

Your board approved $50M in AI spending. Now they want proof it's working. But when you ask your teams what AI tools they're using, and what value they're delivering, the answers are vague, conflicting, and impossible to verify. You're flying blind in the largest technology investment wave in corporate history.

Key Takeaway

As Chief AI Officer (CAIO), your success depends on measuring what your AI investments actually deliver. While 26% of enterprises now have CAIOs, up from 11% two years ago, most still lack a clear way to measure AI impact, prove ROI, or scale successful initiatives. The organizations winning with AI aren't necessarily spending more. They're measuring better. Independent AI monitoring generates reliable data that helps scale pilot projects into enterprise-wide value.

Quick Navigation

- Why CAIOs Need Independent AI Monitoring

- What CAIOs Must Monitor Across the AI Stack

- The Five Layers of Effective AI Monitoring

- The Larridin Advantage: Independent Measurement at Enterprise Scale

- The CAIO Mandate for 2026

- Frequently Asked Questions

Key Terms

- AI Stack: Collection of AI tools an organization uses across different functions. High-performing organizations typically use multiple specialized tools rather than a single platform.

- AI Usage Telemetry: Data captured from actual AI tool usage across the organization—what tools people use, who uses them, and how often—without relying on surveys or vendor dashboards.

- AI Observability: Continuously monitoring and understanding how AI systems perform in production. Detects issues such as data drift, model bias, or performance degradation.

- Shadow AI: AI tools used by employees without formal IT approval or governance, typically through personal accounts or unsanctioned applications.

Why CAIOs Need Independent AI Monitoring

Your board wants one answer: What ROI are we getting from our AI investments?

According to IBM's 2025 global study, 61% of CAIOs control their organization's AI budget. The typical organization uses 11 generative AI models today and plans to use at least 16 by year end. Yet 81% of leaders report difficulty quantifying AI investments, and 79% cite untracked AI budgets as a growing accounting concern.

Here's the problem: your AI tool vendors want to grade their own homework. No enterprise CIO trusts OpenAI to give an unbiased report on whether GPT-5 or Claude performs better. You need an independent evaluation to know which model is better for each purpose.

Impartial evaluation and optimization are critical. According to Larridin's State of Enterprise AI research, organizations with independent measurement capabilities are 2.2 times more likely to demonstrate ROI than those relying on vendor-provided metrics alone. The measurement gap has become the profitability gap.

What CAIOs Must Monitor Across the AI Stack

Your measurement strategy must address four interconnected dimensions.

1. AI Visibility and Discovery

The average enterprise now has 23 different artificial intelligence tools in use, and 45% of adoption happens outside formal IT procurement. According to Larridin's February 2026 research, more than 75% of workers use personal AI tools. This creates unsanctioned "shadow AI" environments where successes stay hidden and unscaled, and security risks compound invisibly. Monitor which AI tools are in use, usage patterns by department, the data flowing into AI systems, and the real-time activity of AI-powered models and agents.
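As a rough illustration of what discovery data makes possible, the sketch below groups usage events by department and separates out tools that are not on an approved list. The allowlist, the event format, and the sample events are all hypothetical; real telemetry would carry far more detail.

```python
from collections import defaultdict

# Hypothetical allowlist of IT-approved tools (an assumption for illustration).
SANCTIONED_TOOLS = {"ChatGPT Enterprise", "Copilot"}

# Hypothetical usage events as (department, tool) pairs.
events = [
    ("Sales", "ChatGPT Enterprise"),
    ("Sales", "Gemini"),
    ("Engineering", "Copilot"),
    ("Engineering", "Claude"),
    ("Engineering", "Claude"),
    ("Finance", "ChatGPT Enterprise"),
]


def summarize_usage(events, sanctioned):
    """Count events per department and tool, and collect unsanctioned tools."""
    usage = defaultdict(lambda: defaultdict(int))
    shadow = defaultdict(set)
    shadow_events = 0
    for department, tool in events:
        usage[department][tool] += 1
        if tool not in sanctioned:
            shadow[department].add(tool)
            shadow_events += 1
    # Share of all observed events that went to unsanctioned tools.
    shadow_share = shadow_events / len(events) if events else 0.0
    return usage, shadow, shadow_share


usage, shadow, shadow_share = summarize_usage(events, SANCTIONED_TOOLS)
for department, tools in shadow.items():
    print(f"{department}: unsanctioned tools in use: {sorted(tools)}")
print(f"Share of events on unsanctioned tools: {shadow_share:.0%}")
```

A per-department view like this helps separate two different follow-ups: a security review for risky tools, and a closer look at shadow tools that may be worth sanctioning and scaling.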

2. Proficiency and Maturity

Utilization metrics tell you who logged in. Proficiency metrics tell you who's creating value. Larridin's research found that 85.7% of workers save 10 hours monthly or less through AI use, while the top 6% save 20+ hours. Track skill levels across roles, adoption depth (passive users vs. power users), capability gaps that prevent higher-value use cases, and organizational readiness for advanced AI applications, including agentic AI systems.
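To make the utilization versus proficiency distinction concrete, here is a minimal sketch that buckets users by self-reported or measured hours saved per month. The 10-hour and 20-hour cutoffs are borrowed from the figures above purely for illustration; the tier names, the function name, and the sample data are assumptions, not a Larridin scoring method.

```python
from collections import Counter


def proficiency_tier(hours_saved_per_month):
    """Map monthly hours saved to a hypothetical proficiency tier."""
    if hours_saved_per_month >= 20:
        return "power user"
    if hours_saved_per_month > 10:
        return "developing"
    return "light user"


# Hypothetical per-user monthly hours saved.
hours_saved = {"u1": 2.0, "u2": 8.5, "u3": 10.0, "u4": 14.0, "u5": 25.0}

tiers = {user: proficiency_tier(hours) for user, hours in hours_saved.items()}
distribution = Counter(tiers.values())

for tier, count in distribution.items():
    print(f"{tier}: {count} of {len(hours_saved)} users")
```

Login counts would treat all five of these users the same. The tier distribution is what shows where training or workflow redesign might move people up.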

3. Token Management and Cost Optimization

Your AI budget requires token visibility to track actual consumption patterns and costs, not just license counts. Monitor total AI spend versus budgeted amounts, prompt efficiency and quality, license utilization, waste, and optimization opportunities through better prompt engineering. Tool overlap and redundancy are a top budget drain for 22% of leaders, but you can't optimize spending you can't see.
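The arithmetic behind token-level cost tracking is simple once consumption data exists. The sketch below totals spend per team from token counts and compares it with a budget. The model names, per-token prices, budgets, and usage records are all hypothetical placeholders, not real vendor pricing.

```python
# Hypothetical price per 1,000 tokens for two placeholder models.
PRICE_PER_1K_TOKENS = {"model-a": 0.01, "model-b": 0.03}

# Hypothetical monthly token consumption records.
usage_records = [
    {"team": "Support", "model": "model-a", "tokens": 2_000_000},
    {"team": "Support", "model": "model-b", "tokens": 500_000},
    {"team": "Marketing", "model": "model-a", "tokens": 1_000_000},
]

# Hypothetical monthly budgets, in the same currency as the prices.
monthly_budget = {"Support": 30.0, "Marketing": 20.0}


def spend_by_team(records, prices):
    """Sum token cost per team: tokens / 1000 * price per 1,000 tokens."""
    totals = {}
    for record in records:
        cost = record["tokens"] / 1000 * prices[record["model"]]
        totals[record["team"]] = totals.get(record["team"], 0.0) + cost
    return totals


def license_utilization(active_users, licensed_seats):
    """Fraction of paid seats that saw any use in the period."""
    return active_users / licensed_seats if licensed_seats else 0.0


spend = spend_by_team(usage_records, PRICE_PER_1K_TOKENS)
over_budget = {
    team: round(spend[team] - monthly_budget[team], 2)
    for team in spend
    if spend[team] > monthly_budget[team]
}

print("Spend by team:", spend)
print("Over budget by:", over_budget)
print("Seat utilization:", license_utilization(active_users=60, licensed_seats=100))
```

Comparing token spend with seat utilization side by side is one way to spot both kinds of waste: heavy consumption with little output, and paid licenses that nobody uses.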

4. ROI, Impact, and Risk

According to Larridin's research, 24.4% of organizations admit they have established no correlation between AI usage and business outcomes. In other words, they're tracking logins, not value. You need to track business outcomes tied to AI initiatives, risk exposure levels, risk mitigation effectiveness, compliance with internal policies and external regulations, and strategic readiness. Even as AI adoption spreads, governance and measurement lag. Only 25% of organizations report fully implemented AI governance programs, and many still don't track outcomes.
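One simple starting point for linking usage to outcomes is a correlation coefficient between a usage measure and a business metric. The sketch below computes a Pearson correlation by hand, using only the standard library. The metric names and the five data points are hypothetical, and a strong correlation alone does not establish that AI usage caused the outcome.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length, at least 2 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical per-team data: weekly AI sessions and tickets resolved.
weekly_ai_sessions = [5, 12, 20, 33, 41]
tickets_resolved = [48, 55, 61, 70, 79]

r = pearson(weekly_ai_sessions, tickets_resolved)
print(f"Correlation between AI usage and tickets resolved: {r:.3f}")
```

In practice this would be a first screen only. Controlling for team size, seasonality, and other changes made during the same period is what turns a correlation into a defensible ROI claim.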

The difference between vendor evaluation metrics and what CAIOs need is fundamental. OpenAI measures model performance: latency, tokens, error rates. Larridin Scout measures organizational fitness: Did the human get more productive? Did the workflow improve? Did we save money? These answer the CFO's ROI questions, not just the engineer's latency questions, with end-to-end AI monitoring.

The Five Layers of Effective AI Monitoring

Leading CAIOs build monitoring infrastructure across five architectural layers.

- Technical Layer: Monitor CPU and GPU utilization for AI workloads, latency and response times, bottlenecks in pipelines, and model accuracy. According to Gartner, by 2026 more than 80% of enterprises will have used GenAI APIs or models and/or deployed GenAI-enabled applications in production environments.

- Operational Layer: Track who uses AI systems, how often, and for what purposes. Detect shadow AI through comprehensive discovery across sanctioned and unsanctioned tools.

- Strategic Layer: Connect AI usage to business outcomes—time saved per user, revenue impact from AI-augmented processes, cost reduction through automation, and quality improvements.

- Governance Layer: Monitor data exposure and privacy violations, policy compliance and audit trails, model behavior including bias and hallucinations, and security risks from AI tool proliferation.

- Intelligence Layer: Use AI to monitor AI impact with automated correlation, predictive alerting, recommendations for optimization, and continuous learning. According to LogicMonitor’s 2026 observability and AI outlook, autonomous IT is becoming the leading 2026 operational requirement.

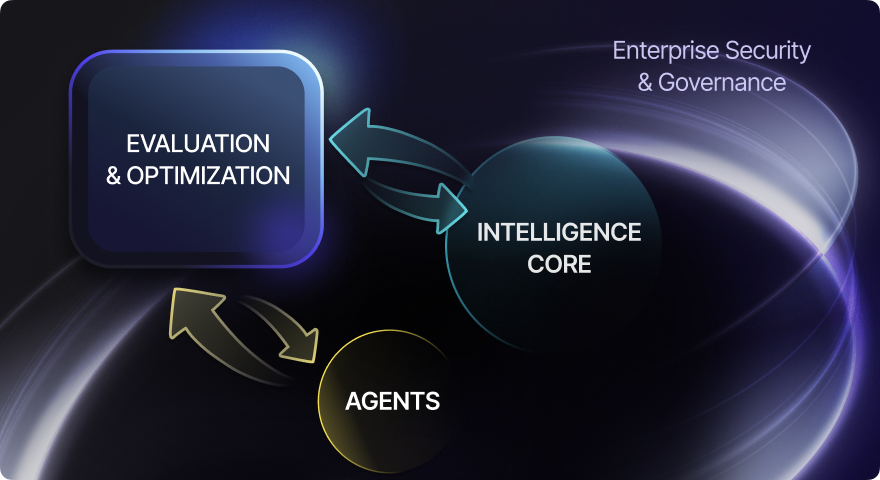

The Larridin Advantage: Independent Measurement at Enterprise Scale

Larridin is the independent AI impact measurement platform. We quantify usage, proficiency, and impact across humans and agents, and enable trusted AI governance at scale.

What makes independent measurement different:

- Vendor Neutrality: Measure performance across all AI tools, without bias toward any specific model or vendor.

- AI Usage Telemetry: Aggregated monitoring shows what AI tools are actually being used—not just what’s licensed.

- Business Impact Focus: Measure AI across five dimensions: governance, velocity, effectiveness, quality, and impact.

- Proficiency Measurement: Track skill development and identify beginners versus power users.

- Complete AI Stack Visibility: Unified visibility across LLMs, agents, and AI workloads, which matters because high-ROI organizations average 2.7 tools.

The CAIO Mandate for 2026

Research shows that 88% of business executives are increasing AI budgets in the next 12 months due to agentic AI. But budget increases without measurement infrastructure drive expensive chaos, not competitive advantage.

Your mandate as CAIO:

- Deploy independent measurement

- Move from utilization to proficiency measurement

- Connect every tool, task, and team to business results

- Build governance into measurement infrastructure

- Establish vendor accountability with objective performance data

The leaders of 2026 will not be those with the biggest AI budgets. They will be those with the most accurate AI insights.

Frequently Asked Questions

How does Scout handle scalability as our AI deployment grows?

Scout is built for enterprise scalability from day one. The platform scales horizontally to monitor thousands of users across multiple AI tools simultaneously without performance degradation.

Whether you're tracking 100 employees or 100,000, Scout's architecture handles data ingestion and analysis in real time. As your AI deployment expands to new departments, geographies, or use cases, Scout automatically discovers and begins monitoring new AI agent activity, without manual configuration or additional infrastructure.

What performance metrics does Scout track for AI monitoring?

Scout tracks comprehensive performance metrics across five dimensions. For AI agent monitoring, Scout measures response times, completion rates, and interaction patterns. For application monitoring, the platform captures which AI tools are active, session duration, and feature utilization.

Scout also quantifies business impact through productivity gains, time savings per user, and cost per AI interaction. These performance metrics connect technical AI deployment data to business outcomes, enabling data-driven decision-making about which tools and workflows deliver the highest ROI.

How quickly can Scout deploy monitoring solutions across our organization?

Scout typically deploys in about one day for initial implementation. The browser-based monitoring and desktop agents require minimal IT involvement. Users can download and install Scout, and the platform immediately begins discovering AI tools and capturing usage data.

Within the first week, you'll have baseline visibility across your AI landscape. Within 30 days, Scout provides comprehensive analytics on utilization patterns, proficiency levels, and shadow AI discovery. This rapid deployment means you can start making informed decisions about AI investments almost immediately, rather than waiting months for traditional monitoring solutions to be configured and tuned.

Does Scout integrate with our existing AI tools and infrastructure?

Yes. Scout is designed for vendor-neutral monitoring across your entire AI stack. The platform monitors AI agent activity regardless of whether you're using ChatGPT, Claude, Gemini, Copilot, or specialized domain-specific AI tools.

Scout captures data at the application level through a centrally distributed browser plug-in, so it works with both sanctioned enterprise AI deployments and shadow AI tools, without API access or vendor integration. Vendor independence means the monitoring is objective: it measures actual usage and business impact across all your AI investments.

What makes Scout's approach to AI monitoring different from vendor dashboards?

Vendor dashboards show you what their AI agent did; Scout shows you what your people accomplished. Vendor monitoring solutions track technical performance metrics such as latency and token consumption. Scout measures organizational performance: productivity gains, proficiency development, workflow improvements, and ROI. Vendor tools only see their own AI deployment.

Scout provides unified visibility across your entire AI stack, enabling informed decision-making about which tools deliver value. Most critically, Scout provides independent data ingestion and analysis, so you're not relying on vendors to grade their own homework when evaluating AI agent performance and business impact.

Are you ready to transform your AI investments from expense to competitive advantage?

About Larridin

Larridin is the independent AI impact measurement platform that quantifies usage, proficiency, and impact across humans and agents, and enables trusted AI governance at scale.